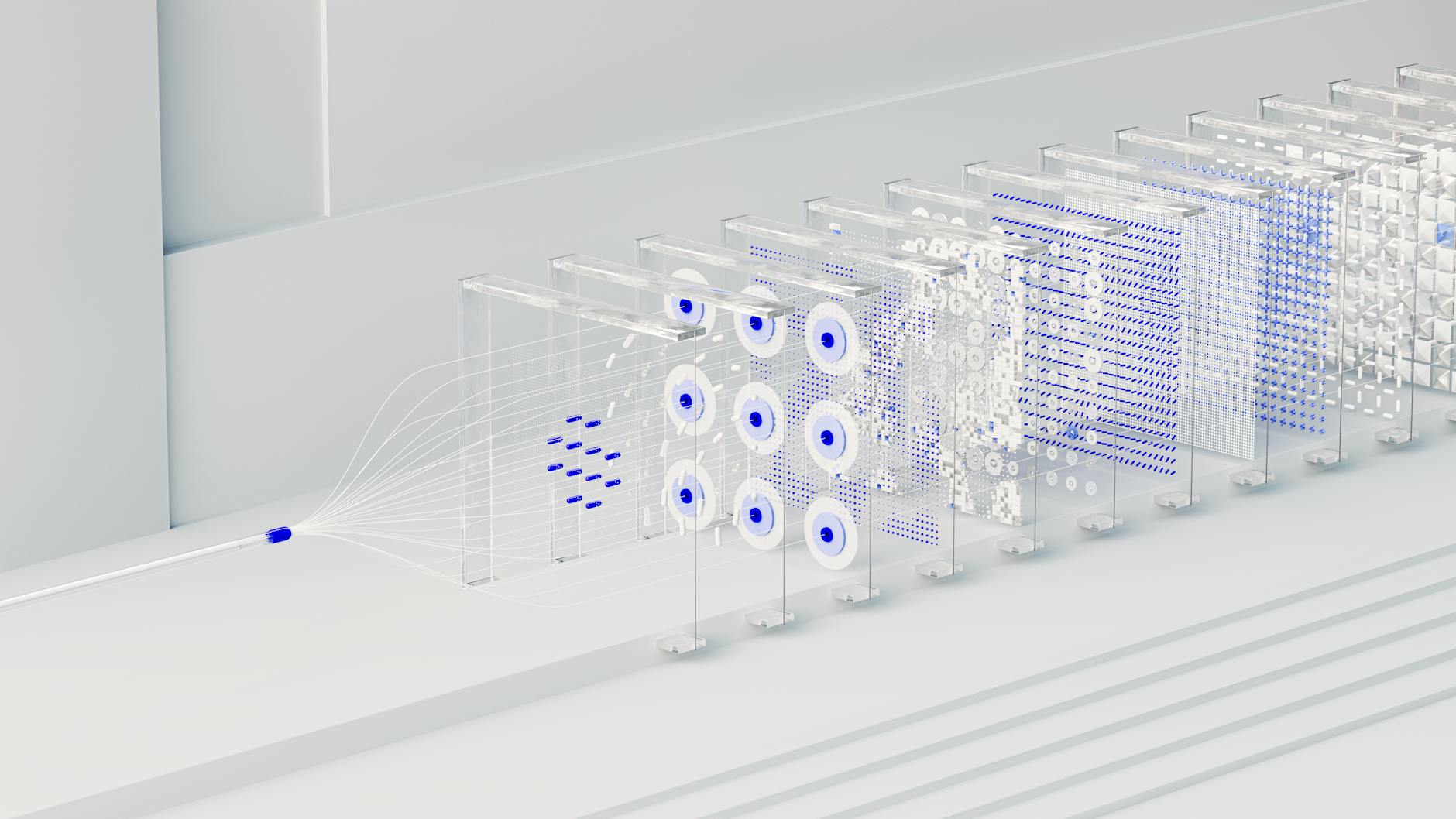

Photo by Google DeepMind on Pexels

Introduction to Mitigating Bias in AI

As AI continues to permeate every aspect of our lives, from virtual assistants to medical diagnosis, the issue of bias in AI has become a pressing concern. Bias in AI refers to the phenomenon where AI systems produce discriminatory or unfair outcomes, often perpetuating existing social and cultural biases. This can have serious consequences, ranging from unfair treatment of certain groups to perpetuation of harmful stereotypes. In this article, we will explore the top 10 best practices for mitigating bias in AI, providing actionable tips and real-world examples to help professionals develop fair and transparent AI systems.

Understanding Bias in AI

Before we dive into the best practices, it's essential to understand the types of bias that can occur in AI. There are several types of bias, including:

- Selection bias: occurs when the data used to train the AI system is not representative of the population it will be applied to

- Confirmation bias: occurs when the AI system is designed to confirm pre-existing hypotheses or biases

- Anchoring bias: occurs when the AI system relies too heavily on a single piece of information or data point

- Availability heuristic: occurs when the AI system overestimates the importance of vivid or memorable information
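Of these, selection bias is often the easiest to check for directly. As a minimal sketch, assuming you know (or can estimate) each group's share of the population the system will serve, the following compares those shares with the group shares in a training sample. The function name `representation_gaps` and the toy data are hypothetical and only for illustration.

```python
from collections import Counter


def representation_gaps(sample_groups, population_shares):
    """Return (sample share - population share) for each group.

    A positive gap means the group is over-represented in the sample,
    a negative gap means it is under-represented.
    """
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in population_shares.items()
    }


# Hypothetical data: group labels of 100 training rows, and the shares
# we assume each group has in the target population.
sample = ["a"] * 80 + ["b"] * 20
population = {"a": 0.5, "b": 0.5}

gaps = representation_gaps(sample, population)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
# a: +0.30
# b: -0.30
```

A large gap does not prove the resulting model will be unfair, but it is a cheap early signal that more data from the under-represented group may be needed.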

# Real-World Examples of Bias in AI

To illustrate the issue of bias in AI, let's consider a few real-world examples:

- In 2018, Reuters reported that an experimental AI-powered recruitment tool built at Amazon had learned to penalize female candidates, downgrading resumes that included the word "women's."

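- The 2018 Gender Shades study found that commercial facial analysis systems used for gender classification were far less accurate for darker-skinned women than for lighter-skinned men, with error rates of up to 34.7% for darker-skinned women compared to at most 0.8% for lighter-skinned men.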

- A language translation system was found to be biased against certain languages, with some languages being more prone to errors than others.

Top 10 Best Practices for Mitigating Bias in AI

So, how can we mitigate bias in AI? Here are the top 10 best practices:

- 1. Diverse and Representative Data: Ensure that the data used to train the AI system is diverse and representative of the population it will be applied to. This can involve collecting data from a variety of sources, including underrepresented groups.

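- 2. Data Preprocessing: Preprocess the data to remove or mask sensitive or unnecessary information that could perpetuate bias. This can involve dropping protected attributes, checking the remaining features for proxies of those attributes, and reweighting or resampling underrepresented groups. Routine steps such as data normalization and feature scaling are still useful, but they do not remove bias on their own.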

- 3. Fairness Metrics: Use fairness metrics to evaluate the performance of the AI system and detect any biases. This can involve metrics such as demographic parity, equalized odds, and calibration.

- 4. Regular Auditing: Regularly audit the AI system to detect any biases or discriminatory outcomes. This can involve techniques such as sensitivity analysis, robustness analysis, and explainability techniques.

- 5. Human Oversight: Implement human oversight and review processes to detect and correct any biases or errors. This can involve techniques such as human-in-the-loop feedback, human-centered design, and participatory design.

- 6. Explainability Techniques: Use explainability techniques to provide insights into the decision-making process of the AI system. This can involve techniques such as feature importance, partial dependence plots, and SHAP values.

- 7. Transparency: Provide transparency into the AI system, including information about the data used, the algorithms employed, and the decision-making process. This can involve documenting datasets and models, for example with datasheets for datasets and model cards, so that users and reviewers can see how the system was built and what it is intended for.

- 8. Inclusive Design: Design the AI system with inclusivity in mind, taking into account the needs and perspectives of diverse users. This can involve techniques such as co-design, participatory design, and human-centered design.

- 9. Continuous Monitoring: Continuously monitor the AI system for any biases or discriminatory outcomes, and take corrective action when necessary. This can involve techniques such as real-time monitoring, continuous evaluation, and ongoing improvement.

- 10. Education and Training: Educate and train developers, users, and stakeholders about the importance of mitigating bias in AI, and provide them with the skills and knowledge needed to develop fair and transparent AI systems.
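To make the fairness metrics in practice 3 concrete, here is a minimal sketch in plain Python. It assumes binary labels and predictions (0 or 1), one sensitive attribute, and at least one positive and one negative example in every group. Demographic parity difference is taken as the largest gap in selection rate between groups, and equalized odds difference as the larger of the true positive rate gap and the false positive rate gap, which is one common convention. The helper names and the toy data are hypothetical.

```python
def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate.

    Assumes binary 0/1 labels and that every group contains both classes.
    """
    rates = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, grp in zip(y_true, y_pred, groups) if grp == g]
        pos = [p for t, p in rows if t == 1]  # predictions for true positives
        neg = [p for t, p in rows if t == 0]  # predictions for true negatives
        rates[g] = {
            "selection_rate": sum(p for _, p in rows) / len(rows),
            "tpr": sum(pos) / len(pos),
            "fpr": sum(neg) / len(neg),
        }
    return rates


def gap(rates, key):
    """Largest difference in one rate across all groups."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)


# Hypothetical labels, predictions, and group membership.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = group_rates(y_true, y_pred, groups)
dp_diff = gap(rates, "selection_rate")
eo_diff = max(gap(rates, "tpr"), gap(rates, "fpr"))

print("Demographic parity difference:", dp_diff)  # 0.5
print("Equalized odds difference:", eo_diff)      # 0.5
```

A value of 0 would mean the groups are treated identically under that metric. What counts as an acceptable gap depends on the application, so any threshold should be agreed with domain experts rather than hard-coded.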

# Actionable Tips for Implementing Best Practices

To help you implement these best practices, here are some actionable tips:

- Use open-source libraries and frameworks that provide built-in support for fairness and transparency, such as Fairlearn, AI Fairness 360, and TensorFlow Fairness Indicators.

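- Implement data preprocessing techniques such as dropping or masking protected attributes, checking for proxy features, and reweighting or resampling underrepresented groups. Data normalization and feature scaling remain useful, but they do not remove sensitive information by themselves.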

- Use fairness metrics such as demographic parity, equalized odds, and calibration to evaluate the performance of the AI system.

- Implement human oversight and review processes to detect and correct any biases or errors.

- Use explainability techniques such as feature importance, partial dependence plots, and SHAP values to provide insights into the decision-making process of the AI system.
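As one example of a bias-aware preprocessing step, the sketch below implements a simple form of reweighing: each training row gets a weight so that, after weighting, group membership and the label are statistically independent. It assumes one categorical sensitive attribute and that every (group, label) combination in the data is non-empty. The function name and the toy data are hypothetical, and this is an illustration of the idea rather than a replacement for the library implementations.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each row by P(group) * P(label) / P(group, label).

    Combinations that are rarer than independence would predict get
    weights above 1, and more common combinations get weights below 1.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        group_counts[g] * label_counts[y] / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


# Hypothetical data: group "a" has mostly positive labels, "b" mostly negative.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)


def weighted_positive_rate(group):
    """Weighted share of positive labels within one group."""
    rows = [(w, y) for w, g, y in zip(weights, groups, labels) if g == group]
    return sum(w for w, y in rows if y == 1) / sum(w for w, _ in rows)


print(round(weighted_positive_rate("a"), 3))  # 0.5
print(round(weighted_positive_rate("b"), 3))  # 0.5
```

Many scikit-learn estimators accept such weights through the `sample_weight` argument of `fit`, so the rest of a training pipeline can usually stay unchanged.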

Code Snippets for Mitigating Bias in AI

To illustrate the implementation of these best practices, let's consider a code snippet:

```python
# Import necessary libraries
import pandas as pd
from sklearn.preprocessing import StandardScaler
from sklearn.model_selection import train_test_split
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, classification_report, confusion_matrix

# Load the dataset
df = pd.read_csv("data.csv")

# Split the data into training and testing sets
X_train, X_test, y_train, y_test = train_test_split(
    df.drop("target", axis=1), df["target"], test_size=0.2, random_state=42
)
# Work on copies so the column assignments below do not touch df
X_train, X_test = X_train.copy(), X_test.copy()

# Preprocess the data: fit the scaler on the training set only, so that
# statistics from the test set do not leak into training
numeric_cols = ["feature1", "feature2"]
scaler = StandardScaler()
X_train[numeric_cols] = scaler.fit_transform(X_train[numeric_cols])
X_test[numeric_cols] = scaler.transform(X_test[numeric_cols])

# Train a random forest classifier
clf = RandomForestClassifier(n_estimators=100, random_state=42)
clf.fit(X_train, y_train)

# Evaluate the performance of the classifier
y_pred = clf.predict(X_test)
print("Accuracy:", accuracy_score(y_test, y_pred))
print("Classification Report:")
print(classification_report(y_test, y_pred))
print("Confusion Matrix:")
print(confusion_matrix(y_test, y_pred))
```

This code snippet illustrates data preprocessing, model training, and model evaluation with standard performance metrics (accuracy, a classification report, and a confusion matrix). These are aggregate measures: on their own they do not show whether performance differs across groups, so they should be combined with the fairness metrics discussed earlier.
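Aggregate accuracy can hide large differences between groups. As a hedged sketch of a per-group breakdown, the helper below splits accuracy by a sensitive attribute. The `groups` list stands in for a hypothetical group column aligned with the test set, and the labels and predictions are toy values rather than output from the classifier above.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return the accuracy of the predictions within each group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}


# Hypothetical test labels, predictions, and group membership.
y_test = [1, 0, 1, 0, 1, 0, 1, 0, 1, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1, 0, 1]
groups = ["a"] * 6 + ["b"] * 4

overall = sum(t == p for t, p in zip(y_test, y_pred)) / len(y_test)
per_group = accuracy_by_group(y_test, y_pred, groups)

print("Overall accuracy:", overall)      # 0.7
print("Accuracy by group:", per_group)   # {'a': 1.0, 'b': 0.25}
```

In this toy example an overall accuracy of 0.7 masks the fact that the model is right every time for group "a" and only a quarter of the time for group "b", which is exactly the kind of gap regular auditing is meant to surface.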
